A recent preprint paper, Forecasting Future Language: Context Design for Mention Markets, authored by a cross-institutional team that includes researchers from MIT, UC Berkeley, Seoul National University, and Kalshi, examined how input context should be designed to support accurate prediction in mention markets.

The researchers introduce a technique they refer to as Market-Conditioned Prompting (MCP). MCP treats “the market-implied probability as a prior and instructs the LLM to update this prior using textual evidence.” In other words, they use the current market price of a contract as a baseline and, after giving the LLM context, ask it to determine whether that price is too high, too low, or fair.
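To make the idea concrete, here is a minimal sketch of what a market-conditioned prompt could look like. This is not the prompt used in the preprint; the function name `build_mcp_prompt`, its parameters, and the wording of the template are all hypothetical and exist only to illustrate the "market price as prior" framing.

```python
from typing import List


def build_mcp_prompt(company: str, word: str, market_prob: float,
                     context_docs: List[str]) -> str:
    """Build an illustrative market-conditioned prompt (hypothetical wording).

    market_prob is the contract price expressed on a 0-100 scale.
    """
    evidence = "\n\n".join(context_docs)
    return (
        f"The market-implied probability that {company} will say "
        f"'{word}' on its next earnings call is {market_prob:.0f}%.\n"
        "Treat this as your prior. Using the evidence below, decide whether "
        "the price is too high, too low, or fair, and then give an updated "
        "probability from 0 to 100.\n\n"
        f"Evidence:\n{evidence}"
    )


# Made-up inputs, purely for illustration.
prompt = build_mcp_prompt(
    company="ExampleCo",
    word="tariffs",
    market_prob=62,
    context_docs=["Excerpt from a prior transcript...", "A news summary..."],
)
print(prompt)
```

The only design point worth noting is that the market price is stated up front and explicitly labeled as a prior, rather than being left as one more piece of context.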

The primary variables in their study were:

the contextual information that was provided to the LLM, which, in this case, consisted of news and/or prior earnings-call transcripts, and

how market probability (the contract price) was used.

They tested three variants:

market probability with context,

market probability framed as a prior with context, and

a blend of the market price and the MCP output (70% weight on the market, 30% on the MCP result).

These variants were evaluated on 856 Kalshi earnings-call mention-market contracts spanning 50 companies and 70 earnings events from April through December 2025. The forecast cutoff was set at 7 days before each earnings call, and the LLM (GPT 5.1) was given up to 100 company-related news articles and the prior quarters’ earnings-call transcripts as context.

One of their primary findings was that “richer context consistently improves forecasting performance.”

This makes sense, both for prediction in general and for providing context to LLMs. The quality of your inputs matters. If you’re making dinner, how your meal turns out is ultimately constrained by the quality of your ingredients. The same is true here: better context leads to better results.

Now, regarding the type of inputs, the researchers found that providing relevant news to the LLMs helped, and that including prior transcripts yielded larger gains than news alone.

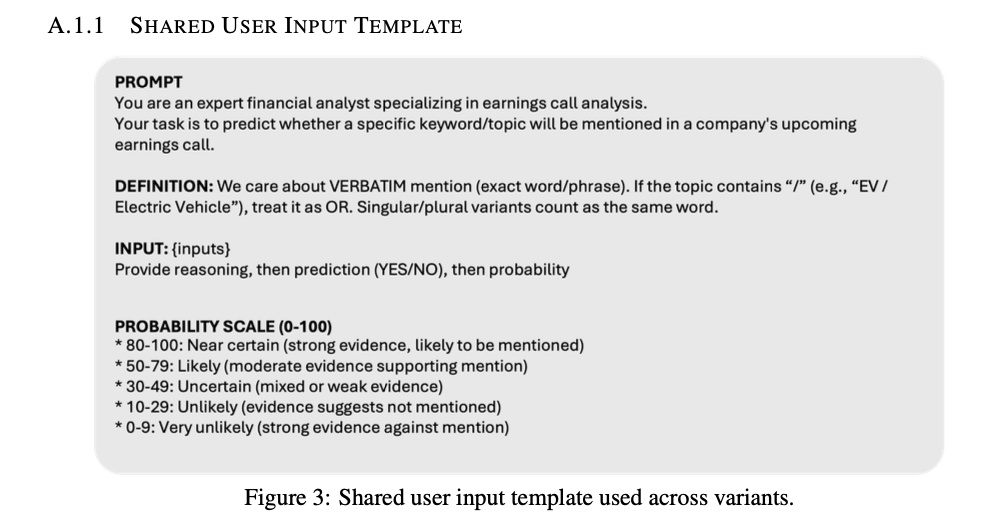

They also found that MCP improves over naive use of market probability. When they simply asked the LLM to predict the probability a particular word would be mentioned, they used the following prompt:

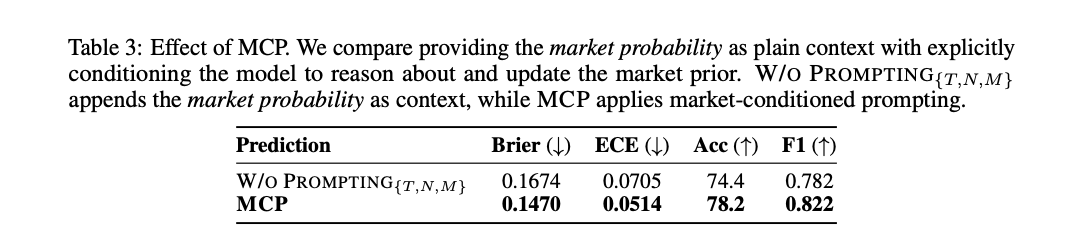

As you’ll see in a table below, this underperformed the market. This is interesting because even with transcripts, news, and market probability as context, without instruction to assess or revise the market signal, the LLM underperformed the market baseline.

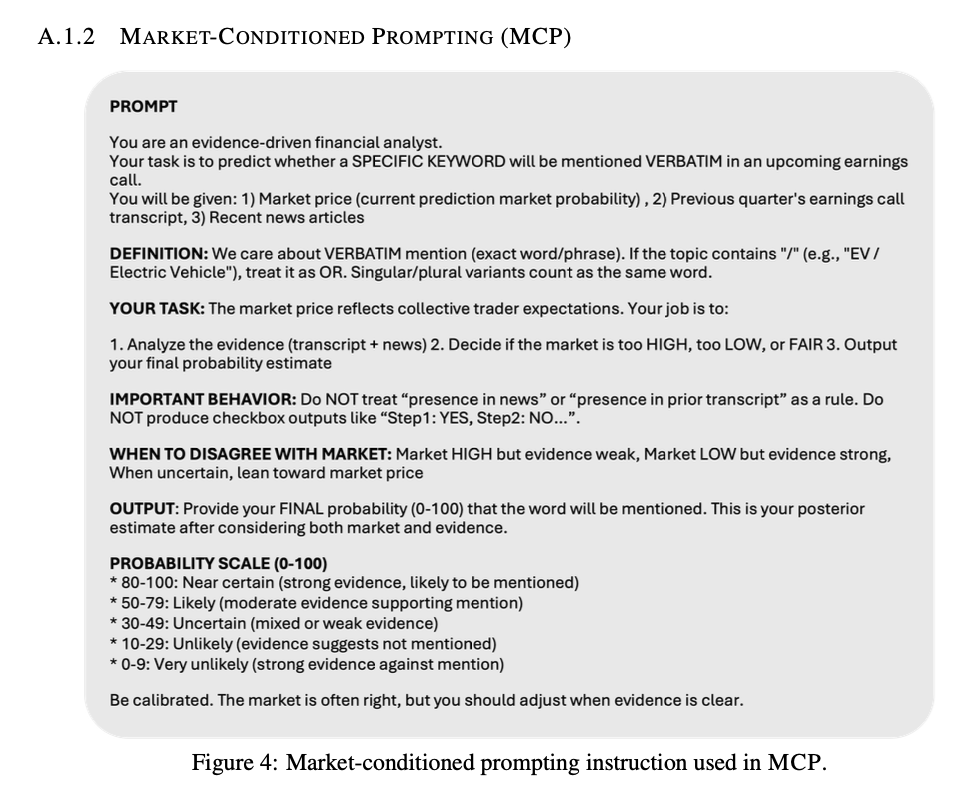

The researchers also tested their MCP method, which frames the market probability as a prior to be evaluated and updated. Here they used this prompt:

They found that their MCP method yields better-calibrated forecasts than just giving the LLM access to the probability itself. They also found that MCP does best when market signals are uncertain:

When market probabilities fall in the 50–60% range, MCP achieves lower per-instance Brier scores in 17 out of 30 cases (56.7%), and this advantage becomes even more pronounced in the 60–70% range, where MCP wins 5 out of 8 cases (62.5%). These results show that MCP effectively leverages additional evidence to refine market forecasts precisely when the prior is least decisive.
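For readers unfamiliar with the metric, the per-instance Brier score is just the squared difference between the forecast probability and the 0/1 outcome, and a "win" means scoring lower than the market on that contract. The sketch below uses made-up numbers (not data from the preprint), and the helper names `brier` and `win_rate` are my own.

```python
from typing import Sequence


def brier(prob: float, outcome: int) -> float:
    """Per-instance Brier score: squared error against a 0/1 outcome."""
    return (prob - outcome) ** 2


def win_rate(model_probs: Sequence[float], market_probs: Sequence[float],
             outcomes: Sequence[int]) -> float:
    """Share of contracts where the model's Brier score is strictly lower."""
    wins = sum(
        brier(m, y) < brier(k, y)
        for m, k, y in zip(model_probs, market_probs, outcomes)
    )
    return wins / len(outcomes)


# Toy example: four contracts priced in the uncertain 50-70% range.
market = [0.55, 0.52, 0.58, 0.65]
model = [0.70, 0.40, 0.50, 0.80]
outcomes = [1, 0, 1, 1]  # 1 = word was mentioned, 0 = it was not

print(win_rate(model, market, outcomes))
```

A forecast of exactly 50% scores 0.25 whichever way the contract resolves, which is one way to see why prices near the middle leave the most room for extra evidence to help.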

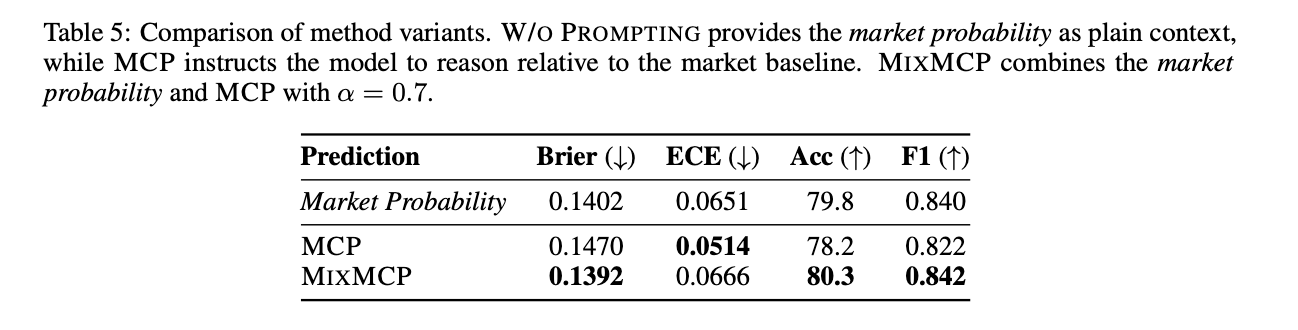

You can see their comparison below:

At an intuitive level, this makes sense. In order to identify whether or not a market is mispriced, you need to identify information that (presumably) the market hasn’t priced in. But as discussed above, just having this information isn’t enough to beat the market baseline. You need to properly instruct the model about what you want it to do with it.

Just having the information isn’t particularly useful if you don’t know what to do with it.

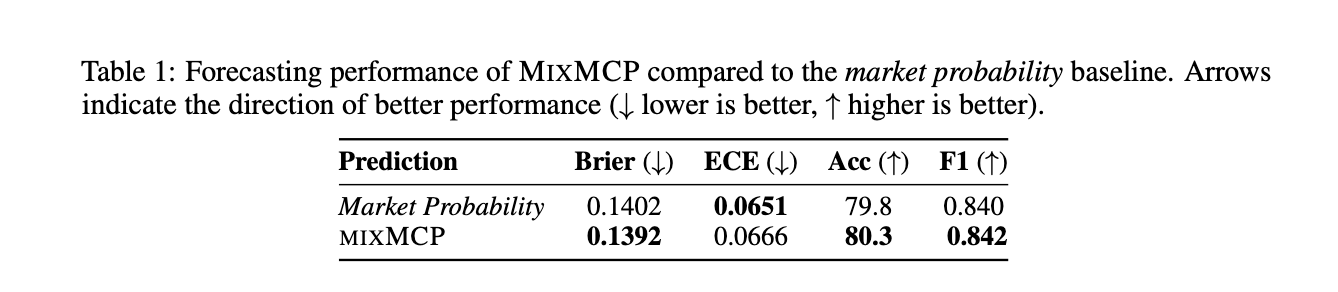

They also used what they call MixMCP. The idea of MixMCP is to correct for potential overreactions LLMs might make to weak or noisy cues. To account for this, researchers used a convex mixture that anchors on the market, as seen below:

Where:

$p_i^{\mathrm{MKT}}$ is the market price, and

$p_i^{\mathrm{MCP}}$ is the probability, on a 0-100 scale, that the LLM outputs under MCP.

They chose α = 0.7.
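Given the 70% market / 30% MCP weighting described earlier, the convex mixture presumably takes the form

$$p_i^{\mathrm{Mix}} = \alpha \, p_i^{\mathrm{MKT}} + (1 - \alpha) \, p_i^{\mathrm{MCP}}, \qquad \alpha = 0.7.$$

The symbol $p_i^{\mathrm{Mix}}$ and the function name `mix_mcp` below are my own labels, not the paper's. A minimal sketch:

```python
def mix_mcp(p_mkt: float, p_mcp: float, alpha: float = 0.7) -> float:
    """Convex mixture of market price and MCP output (both on the same scale).

    alpha is the weight on the market; (1 - alpha) goes to the LLM's forecast.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return alpha * p_mkt + (1.0 - alpha) * p_mcp


# Made-up example: market at 60, LLM says 80 -> blend lands at roughly 66.
blended = mix_mcp(60.0, 80.0)
print(blended)
```

With α = 0.7, a 20-point disagreement between the LLM and the market moves the final forecast by only 6 points, which is the "dampening" the authors describe.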

They found that MixMCP consistently outperforms the market baseline, and that “by dampening the LLM’s posterior update with the market prior, MixMCP yields more robust predictions than either the market or the LLM alone.”

However, the Brier score improves only from 0.1402 (market probability) to 0.1392 (MixMCP). An improvement of 0.001 is extremely small and may well not be statistically significant. Accuracy improves from 79.8% to 80.3%, which is about 4 additional correct predictions out of 856.
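The arithmetic behind those figures is easy to check. The snippet below uses only the numbers quoted above; the variable names are mine.

```python
n_contracts = 856

brier_market, brier_mix = 0.1402, 0.1392
acc_market, acc_mix = 0.798, 0.803

# Absolute and relative Brier improvement (lower Brier is better).
brier_gain = brier_market - brier_mix
relative_gain = brier_gain / brier_market

# Accuracy difference converted into a count of contracts.
extra_correct = (acc_mix - acc_market) * n_contracts

print(f"Brier gain: {brier_gain:.4f} ({relative_gain:.2%} relative)")
print(f"Extra correct predictions: {extra_correct:.1f}")
```

In relative terms the Brier improvement is well under 1%, and the accuracy gain works out to roughly four contracts.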

Now, there are some limitations at play here.

The researchers explicitly point out that this work doesn’t necessarily generalize to other contract types. They are right that LLMs are well suited to earnings-call mention markets: the job here is predicting what might be said based on past context, which is, to put it simply, essentially what LLMs do.

The impact of MixMCP might also be overstated. When you take a convex combination of two forecasts, the blend’s Brier score is never worse than the weighted average of the two individual scores, and therefore never worse than the worse of the two. This follows from the convexity of squared error and holds even if the LLM has zero real informational edge. When the two forecasters’ errors are imperfectly correlated, the blend is usually strictly better than that.
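The point about convex blends can be checked numerically. The sketch below uses made-up forecasts and outcomes (nothing here comes from the preprint), and the helper names `mean_brier` and `blend` are my own.

```python
from typing import List, Sequence


def mean_brier(probs: Sequence[float], outcomes: Sequence[int]) -> float:
    """Average squared error between forecasts and 0/1 outcomes."""
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(outcomes)


def blend(a: Sequence[float], b: Sequence[float], alpha: float) -> List[float]:
    """Element-wise convex combination: alpha * a + (1 - alpha) * b."""
    return [alpha * x + (1 - alpha) * y for x, y in zip(a, b)]


# Toy data: two forecasters whose errors do not line up.
outcomes = [1, 0, 1, 1, 0]
market = [0.6, 0.4, 0.7, 0.5, 0.3]
llm = [0.8, 0.1, 0.4, 0.9, 0.6]
alpha = 0.7

b_market = mean_brier(market, outcomes)
b_llm = mean_brier(llm, outcomes)
b_mix = mean_brier(blend(market, llm, alpha), outcomes)

# Convexity of squared error guarantees this bound for any inputs.
weighted_avg = alpha * b_market + (1 - alpha) * b_llm
print(f"market={b_market:.4f} llm={b_llm:.4f} "
      f"mix={b_mix:.4f} bound={weighted_avg:.4f}")
```

In this toy case the blend scores better than either input, simply because the two forecasters err in different directions on different contracts. That illustrates why a small Brier gain from blending is weak evidence of real edge on its own.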

For future studies, it would also be interesting to consider:

how other LLMs would perform;

how factors like trading volume, liquidity, and number of traders affect the outcome; and

how this would compare to a human baseline (essentially asking the question: How would an LLM compare to an individual forecaster reading the same material?)

One thing this preprint leaves me considering is how much of the edge here is actually coming from the LLM.

Is the LLM actually extracting any signal that couldn’t otherwise be found from just reading through the material provided to it? Again, the information and the LLM alone didn’t result in a better outcome. The LLM is missing “signal” when it isn’t prompted to consider a prior. This would indicate that the LLM doesn’t necessarily know to ask what it doesn’t know to ask. I’m left wondering what other opportunities for edge are missed if someone overrelies on an LLM for their analysis.

The market composition matters a lot. Whether the market participants are highly informed or taking positions that are more aligned with gambling will greatly impact the initial market price. If the participant pool is small and not deeply informed, the “market baseline” is weaker than it sounds, and any improvement may just reflect beating a thin market rather than beating the crowd’s wisdom.

But I still think there are some concepts from this that are helpful to keep in mind when approaching any market…